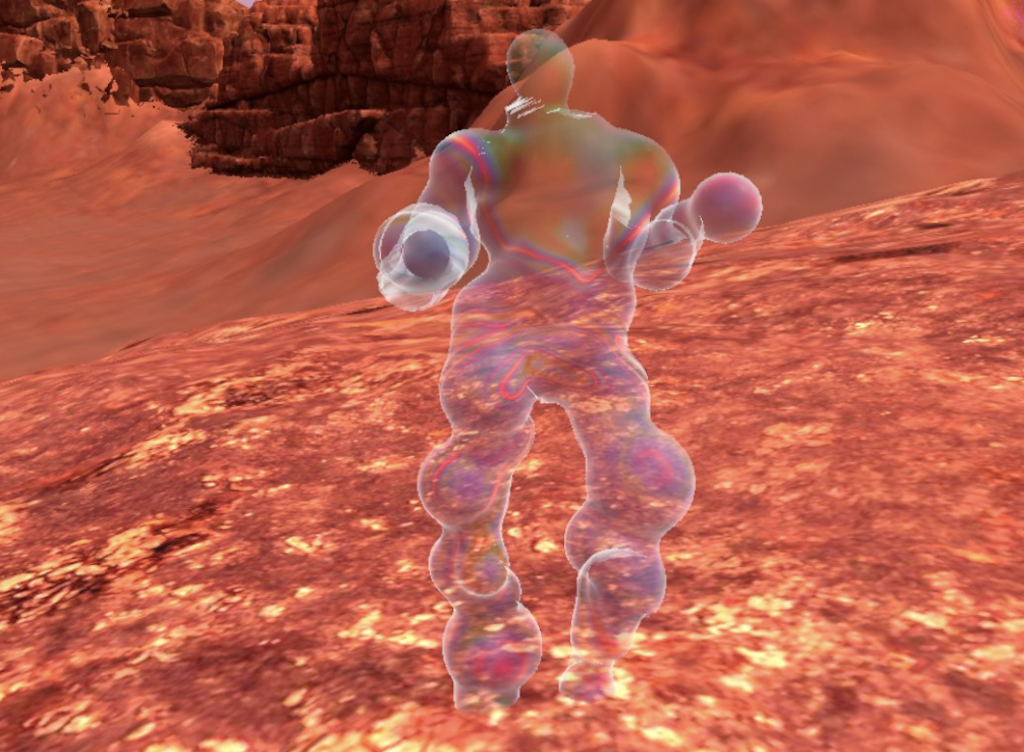

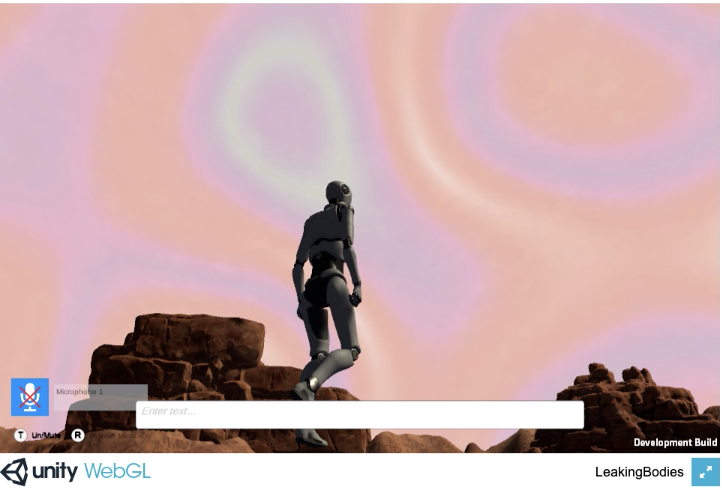

Out of 78 submissions, our Artistic Fellow Janne Kummer made it onto the shortlist of 15 selected VR artworks with XBPMMM! The prize, awarded by DKB in cooperation with CAA Berlin, is announced annually and curated by Tina Sauerländer.

The VR KUNSTPREIS 2023 is dedicated to artists who use virtual reality and expansive installations to create visions for social change.

More information and the entire shortlist are available here: https://vrkunst.dkb.de.

At the end of February 2023, a jury of experts will select the winners of the five working scholarships, each worth 4,000 and running for four months. The works will be shown for two months, starting at the beginning of September 2023, in an exhibition at the Haus am Lützowplatz (HaL), Berlin.